Topic 18: Inference for Comparing Many Group Means (ANOVA)

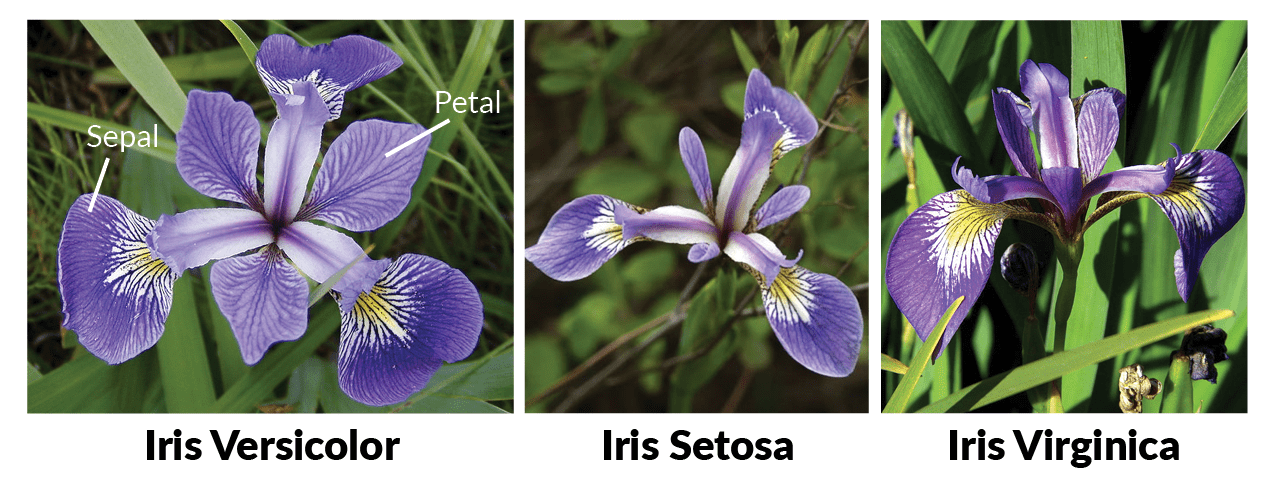

This activity introduces Analysis of Variance (ANOVA) for comparing means across more than two groups simultaneously. We’ll test whether average petal width differs across three varieties of iris using the famous iris dataset collected by Anderson and published by Fisher. We’ll also introduce the Tukey Honest Significant Differences test for pairwise follow-up comparisons.

ANalysis Of VAriance (ANOVA)

In this activity, we consider a method for comparing means from multiple groups. We’ll use a very famous dataset containing measurements on three varieties of iris: setosa, versicolor, and virginica. Our goal is to determine whether the iris data provides significant evidence of a difference in average petal widths across the three varieties.

Notice that none of the tools we’ve encountered so far can be applied directly here. Our hypothesis testing strategies have been limited to comparing numerical measures across one or two populations. We could apply three separate one-versus-one tests (versicolor vs. setosa, versicolor vs. virginica, and setosa vs. virginica), but the probability of an erroneous conclusion grows quickly. At a 5% significance level, the probability of making at least one Type I error across three comparisons is \(1 - (0.95)^3 \approx 14.26\%\). A better option is ANalysis Of VAriance — an ANOVA test.

Watch the video below from Dr. Çetinkaya-Rundel introducing ANOVA.

Dr. Çetinkaya-Rundel’s video walked through the details of ANOVA. The key ideas to carry forward are:

- ANOVA tests whether there is significant evidence of a difference in means across three or more populations.

- The test statistic follows an \(F\)-distribution rather than the normal or \(t\)-distribution.

- The \(p\)-value is the area from the computed \(F\)-statistic into the upper tail of the \(F\)-distribution.

- The interpretation of the \(p\)-value is the same as always — the probability of observing a sample at least as extreme as ours, assuming the null hypothesis is true.

Use the code block below to explore the iris dataset as you answer the questions that follow.

As we’ve done with previous datasets, you can call the name of the data frame or pipe it into functions like head(), dim(), or glimpse().

Which of the following are the correct hypotheses for testing whether average petal widths differ across the three iris varieties?

How many groups are involved in this hypothesis test?

How many degrees of freedom are due to groups?

Use the code block above to find the number of observations in iris. What are the total degrees of freedom?

Running an ANOVA Test in R

Dr. Çetinkaya-Rundel mentions in the video that ANOVA computations are tedious and prone to error — so we use software. In R, the aov() function runs an ANOVA test. Store the result of running aov(Petal.Width ~ Species, data = iris) in an object called ANOVAtable, then view the results by passing that object to summary().

Use the assignment arrow <- to store the result of aov() into ANOVAtable.

ANOVAtable <- ___Now pass ANOVAtable to summary() to view the ANOVA table.

ANOVAtable <- aov(Petal.Width ~ Species, data = iris)

summary(___)ANOVAtable <- aov(Petal.Width ~ Species, data = iris)

summary(ANOVAtable)

ANOVAtable <- aov(Petal.Width ~ Species, data = iris)

summary(ANOVAtable)

ANOVAtable <- aov(Petal.Width ~ Species, data = iris)

summary(ANOVAtable)Use the output to answer the following questions.

How are the Mean Sq values related to the other values in the ANOVA table?

What is the test statistic associated with this ANOVA test?

What is the \(p\)-value associated with this ANOVA test?

What is the conclusion of the test?

What does the conclusion mean in the context of our original question?

The Tukey Test

The ANOVA result tells us that at least one variety has a different average petal width — but it doesn’t tell us which one. The Tukey Honest Significant Differences (HSD) Test can fill that gap. When ANOVA returns a significant result, the Tukey Test runs a collection of pairwise comparisons with adjusted \(p\)-values that account for the multiple comparisons being made.

Since our ANOVA was significant, we’ll follow up with a Tukey Test. In R, we pass the result of aov() directly to TukeyHSD(). Run the code block below and use the output to answer the question that follows.

Which pairs of iris varieties have significantly different average petal widths? Select all that apply.

You may have used the adjusted \(p\)-value column to make your decisions, or you may have noticed that none of the confidence intervals contain 0 — either way leads to the same conclusion. We can also visualize these confidence intervals directly. Run the code block below to produce a plot of the pairwise differences and their confidence intervals.

The dashed vertical line at zero represents no difference. Since all three confidence intervals fall entirely to one side of zero, we have visual confirmation that each pair of varieties differs significantly in average petal width.

Which do you find more useful for communicating the results — the table, the plot, or a combination of both?

Submit

If you are part of a course with an instructor who is grading your work on these activities, please copy and submit both of the hashes below using the method your instructor has requested.

The hash below encodes your responses to the multiple choice and checkbox questions in this activity.

Click the button below to generate your exercise submission code. This hash encodes your work on the graded code exercises in this activity.

You must have attempted the graded exercises before clicking — clicking generates a snapshot of your current results. If you have completed the activity over multiple sessions, please go back through and hit the Run Code button on each graded exercise before generating the hash below, to ensure your most recent results are recorded.

Summary

- ANOVA tests whether there is significant evidence of a difference in means across three or more populations. The null hypothesis states that all group means are equal; the alternative states that at least one differs.

- The \(F\)-statistic is the test statistic for ANOVA. It measures the ratio of variation between groups to variation within groups — a large \(F\) value suggests the group means are more spread out than would be expected by chance alone.

- The \(p\)-value from an ANOVA test is the upper tail area of the \(F\)-distribution beyond the observed \(F\)-statistic. Its interpretation is exactly the same as in our earlier hypothesis tests.

- Running ANOVA in R uses

aov(response ~ group, data = df)followed bysummary()to view the table. - ANOVA only tells you that at least one group mean differs — it does not identify which pair(s). A Tukey HSD Test, run via

TukeyHSD(), performs all pairwise comparisons with adjusted \(p\)-values and should be used as a follow-up when ANOVA is significant. - Why not just run multiple \(t\)-tests? Each additional test inflates the probability of at least one false positive. With three groups and \(\alpha = 0.05\), running all pairwise \(t\)-tests gives a \(1 - 0.95^3 \approx 14\%\) chance of at least one erroneous conclusion. ANOVA and the Tukey correction keep that risk controlled.

The final activity in this series is a regression lab. We’ll shift from comparing group means to modeling the relationship between two numerical variables — using one to predict the other. Linear regression extends the inference framework you’ve built throughout this course into a new and widely-used context.